The Growing Gap Between LLM Use And LLM Traffic

AI search platforms like ChatGPT, Gemini, and Claude (LLMs) are seeing increased usage but send very little referral traffic, leaving many marketers scratching their heads about how to measure that traffic and how to boost it. Both are the wrong questions.

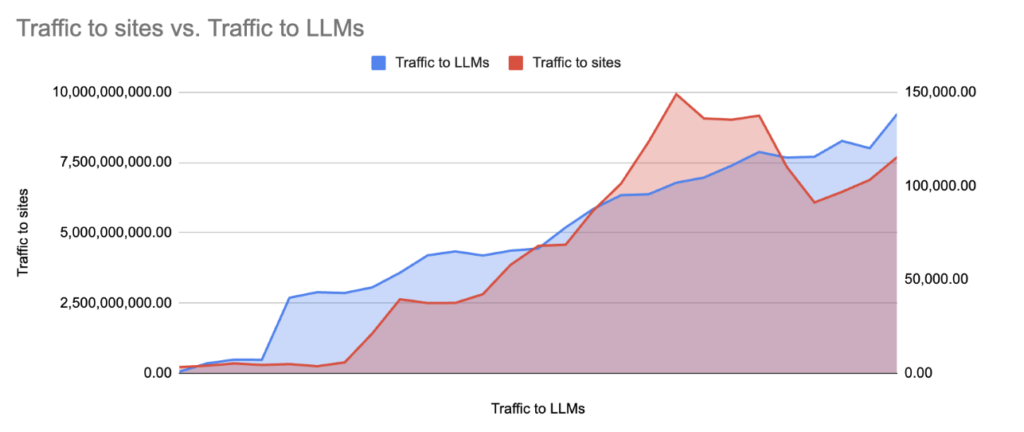

ChatGPT’s monthly active users sit at 810 million. Yet across major industries, AI search platforms drive an average of just 1% of total web traffic, and that figure has remained essentially flat from July 2025 to March 2026, even as LLM usage soared.

This trend tells us two things. First, LLM traffic won’t continue growing forever, and we might be closer to its ceiling than we would have believed 6 months ago. Second, this is true despite the overall influence of LLMs continuing to grow.

The result: we need to become better at measuring the impact of LLMs than just measuring their referral traffic.

What the Traffic Data Actually Shows

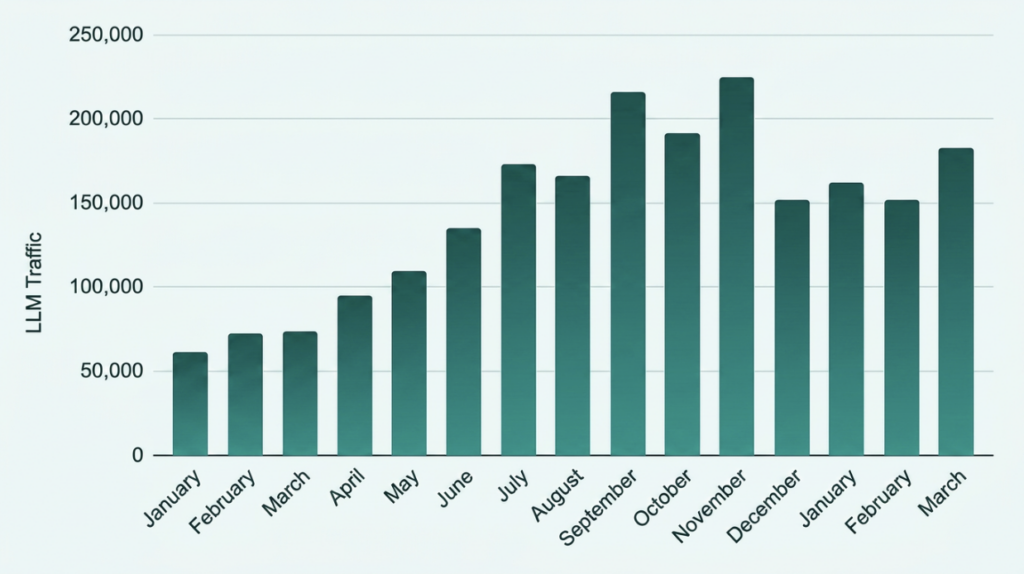

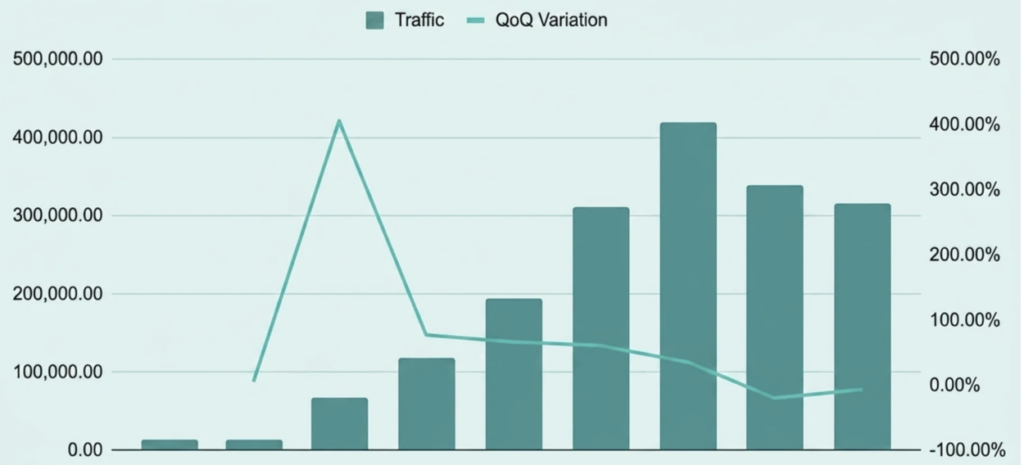

LLM referral traffic is up from 2025, but declined over the two most recent quarters. We analyzed 21 anonymised clients spanning B2B, B2C, e-commerce, and SaaS; the average indexed traffic grew 163% from January 2025 to January 2026. The summer surge was real: traffic increased by 252% from January to August 2025, but then decreased and plateaued after November.
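For readers unfamiliar with indexed traffic, here is a minimal Python sketch of one common way to build such an index: rebase each client’s monthly series so its first month equals 100, then average the rebased values across clients. The exact method behind the figures above isn’t described, so treat this approach as an assumption. The client names and visit counts are invented purely for illustration.

```python
from statistics import mean


def index_series(visits, base=0):
    """Rebase a monthly series so the baseline month equals 100."""
    baseline = visits[base]
    if baseline <= 0:
        raise ValueError("baseline month must have traffic to index against")
    return [100 * v / baseline for v in visits]


def average_index(clients):
    """Average the indexed series across clients, month by month."""
    indexed = [index_series(series) for series in clients.values()]
    return [mean(month) for month in zip(*indexed)]


def growth_pct(index_values, start=0, end=-1):
    """Percent growth between two points of an index."""
    return 100 * (index_values[end] / index_values[start] - 1)


# Hypothetical monthly LLM referral visits (not real client data).
clients = {
    "client_a": [200, 300, 500],
    "client_b": [1000, 1200, 2000],
}
avg = average_index(clients)
print(avg)              # [100.0, 135.0, 225.0]
print(growth_pct(avg))  # 125.0
```

Indexing before averaging gives every client equal weight, so one large site does not dominate the trend.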

But there’s a more nuanced story behind the data:

- Quarterly LLM traffic declined in Q4 ’25 for the first time, and then again in Q1 ’26.

- If we compare June to Jan, traffic to LLMs grew by 30% while referral traffic from LLMs decreased by 22%.

- ChatGPT accounts for the biggest share of referral traffic, but it swings wildly. In September and November, referral traffic to clients almost doubled to ~22 visits per million visitors to ChatGPT, versus a fairly constant rate of ~12 visits per million before.

- Traffic also varies by LLM: ChatGPT sends 3x more traffic than Gemini. Changes in distribution will likely have an impact on total referral traffic from LLMs.

- Claude tripled its platform traffic in March following a surge in mainstream adoption. The traffic it sent increased to 41 referred visits per million. This could be a one-off, but also a more general trend of users leveraging this LLM for informational and navigational purposes beyond its usual coding focus.
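The “visits per million” figures in this list read as a simple normalisation: referral visits received, divided by visits to the LLM platform, scaled to one million. The Python sketch below works under that assumption. The function names and raw counts are hypothetical, and the second helper shows what the 30% and 22% figures above imply for the per-visit referral rate.

```python
def referrals_per_million(referral_visits, platform_visits):
    """Referral visits received per one million visits to the LLM platform."""
    if platform_visits <= 0:
        raise ValueError("platform_visits must be positive")
    return referral_visits * 1_000_000 / platform_visits


def rate_change_pct(platform_growth_pct, referral_growth_pct):
    """Percent change in the per-visit referral rate implied by two growth figures."""
    ratio = (1 + referral_growth_pct / 100) / (1 + platform_growth_pct / 100)
    return (ratio - 1) * 100


# Hypothetical counts, for illustration only (not figures from the dataset).
print(referrals_per_million(60_000, 5_000_000_000))   # 12.0
print(referrals_per_million(110_000, 5_000_000_000))  # 22.0

# Platform traffic +30% and referral traffic -22%, as in the comparison above.
print(round(rate_change_pct(30, -22)))  # -40
```

Normalising this way separates growth in platform usage from changes in click-through behaviour: with usage up 30% and referrals down 22%, the referral rate per platform visit fell by roughly 40%.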

How much traffic do LLMs actually send?

Across industries, LLMs currently drive around 1% of total website traffic, with ChatGPT sending roughly a dozen visits per million users on average. While usage of these platforms continues to grow, referral traffic has plateaued, making it a limited and unreliable metric for performance.

Why is AI traffic so low?

Traffic from AI Answer Engines like ChatGPT, Gemini, and Claude is low because their primary function is to answer questions directly, not send users to external websites. In most cases, users get what they need within the response, which eliminates the need to click. So only a small fraction of interactions—often around 1% or less—turn into referral visits.

Traffic from LLMs to the sites in our dataset versus Traffic to LLMs

After peaking in September/October 2025, the average index drifted back through Q1 2026. Growth has not collapsed, but it has stalled. Chasing that number will become increasingly unrewarding.

Our findings are consistent with those of Kevin Indig, growth strategist and independent researcher, who indicated last November that LLM traffic was shrinking.

Is referral traffic a good KPI for AI search?

No. Referral traffic is not a reliable KPI for AI search. It measures the byproduct of LLM visibility, not visibility itself: it captures only the interactions where a user clicked through, which are systematically in the minority, and ignores the majority that happen without a visit.

Referral numbers are also influenced far more by changes in model output and in how models handle external links than by a brand’s visibility within a given model.

Why don’t users click links in ChatGPT responses?

Users don’t click links in ChatGPT because the platform is designed to resolve queries within the interface. Unlike traditional search engines, where clicking is required to access information, LLMs deliver complete answers directly, reducing the need for external navigation.

3 structural reasons referral traffic understates LLM activity:

- Zero-click is the default. Industry research summarized by Peec.ai shows that 40 out of 100 Google searches produce a click, but in LLMs that number can be as low as 1 in every 100 searches.

- Attribution is fragmentary. Many LLM interactions happen inside apps, APIs, voice interfaces, and browser extensions that never pass referrer data. The chatgpt.com domain you see in GA4 is the tip of a much larger iceberg.

- The function of this channel isn’t traffic generation. The absolute volume of traffic from AI engines will be far lower than their capacity to influence direct traffic via increased brand and product awareness and dark search traffic (the LLM equivalent of dark social).

If your competitor appears in every relevant ChatGPT response and you don’t, but neither of you shows significant LLM referral traffic in GA4, you are losing in terms of visibility, but your dashboards won’t tell you that.

AI Search as an Awareness Channel

LLMs occupy the same strategic territory as earned media, PR, and brand advertising: they build salience, trust, and consideration before a purchase decision is made. The right analogy isn’t paid search; it’s the moment someone asks a friend for a recommendation.

Brands appearing in AI responses for high-intent queries, like “best legal software for a growing firm” or “top supplements for endurance athletes”, are entering the consideration set before a single click happens. That’s top-of-funnel brand work, not a traffic channel.

The Measurement Gap and Why It Matters Now

The difficulty of measurement isn’t a reason to deprioritise the channel; it’s a reason to invest in better measurement now, while the field is still forming.

The current toolkit’s limits:

- GA4 / analytics referral data: Useful as a directional signal, but structurally incomplete due to zero-click behaviour, dark traffic, and the absence of in-app/API referral data. While it’s not an indicator of LLM visibility, it can reveal changes in LLM adoption, or interface changes within the LLMs, that shift traffic patterns.

- Dedicated LLM visibility tools (Profound, Peec): These tools query LLMs at scale across curated prompt sets and measure how often your brand is mentioned and cited, and in what position relative to competitors. Share of Voice and visibility are emerging as the primary metrics.

- Manual prompt auditing: Still valuable for qualitative insight, such as testing how you’re described, which competitors appear alongside you, and which sources the model cites.

Why imperfect measurement beats no measurement: Rand Fishkin published a study on SparkToro detailing how, despite its incredibly high variability, tracking LLM prompts is a great tool for directional awareness.

All of this reinforces the notion that visibility percent, across loads of prompts, whether written by humans or generated synthetically, are likely to be decent proxies for how brands actually show up in real AI answers.

-Rand Fishkin

Best Practices for Measuring LLM Visibility

What should marketers focus on instead of traffic?

Marketers should focus on visibility, representation, and influence within AI-generated answers. The key question is not how much traffic LLMs drive, but whether your brand appears when potential customers ask relevant questions. Success in AI search means being included in the consideration set before a user ever clicks.

How to Measure AI Search/LLM Visibility: A Framework

Here’s a practical framework for marketing directors to take and run with, structured as a hierarchy in order of AI maturity:

Step 1: Low maturity: Foundational (what everyone should be doing)

- Segment LLM referral traffic in GA4/analytics using a maintained source list (chatgpt.com, claude.ai, perplexity.ai, gemini.google.com, copilot.microsoft.com, grok.com, etc.)

- Be aware of changes in LLM traffic composition and sudden shifts; they might indicate changes in your audience or the LLMs.

- Audit your robots.txt and JS to ensure you’re not inadvertently preventing crawling and indexation by AI crawlers.
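Two of the foundational steps above lend themselves to a quick script. The Python sketch below tags referrer URLs against the source list from the first bullet, and checks a robots.txt file for rules that block AI crawlers. The function names are hypothetical, and the crawler tokens are an assumed, non-exhaustive list that should be verified against each vendor’s documentation. It does not cover the JavaScript rendering part of the audit.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Source list taken from the step above; extend it as new platforms appear.
LLM_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "grok.com": "Grok",
}

# Assumed crawler tokens (not from the article); verify before relying on them.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def classify_referrer(referrer):
    """Return the LLM platform name for a referrer URL, or None."""
    if "//" not in referrer:
        referrer = "//" + referrer  # let urlparse treat a bare host as a host
    host = (urlparse(referrer).hostname or "").lower()
    for domain, platform in LLM_SOURCES.items():
        # Match the domain itself or any subdomain (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return platform
    return None


def blocked_ai_crawlers(robots_txt, url="/"):
    """List the assumed AI crawler tokens that robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


print(classify_referrer("https://www.perplexity.ai/search?q=x"))  # Perplexity
print(classify_referrer("https://www.google.com/"))               # None

robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
print(blocked_ai_crawlers(robots))  # ['GPTBot']
```

Applied to the referrer or source column of an analytics export, `classify_referrer` gives a per-platform breakdown that makes shifts in traffic composition easier to spot.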

Step 2: Medium maturity: Systematic visibility tracking

- Deploy a dedicated GEO/AEO monitoring tool. Prioritize those models used by your audience (e.g. is someone really looking for you in Copilot or Perplexity?).

- Identify the main areas for your business and monitor them using real prompts (if you have access to real research) or synthetic ones. Distrust volume estimations from tools; they’re mostly made up. Focus on thorough tagging.

- Ask which metrics are useful beyond SOV/visibility. Position and sentiment can be relevant, but they aren’t always.
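To make the SOV/visibility metrics concrete, here is a minimal Python sketch that computes both from a batch of LLM answers. The definitions are assumptions, since tools differ: visibility is the share of answers that mention a brand, and share of voice is the brand’s share of all tracked-brand mentions. The brand names and answers are invented, and the plain substring matching is deliberately naive.

```python
from collections import Counter


def visibility_metrics(responses, brands):
    """Compute per-brand visibility and share of voice from LLM answers.

    visibility: share of responses that mention the brand at least once.
    share_of_voice: the brand's share of all tracked-brand mentions,
    counting at most one mention per response.
    """
    mentions = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {
        brand: {
            "visibility": mentions[brand] / len(responses) if responses else 0.0,
            "share_of_voice": mentions[brand] / total if total else 0.0,
        }
        for brand in brands
    }


# Hypothetical answers to three prompts; the brand names are made up.
responses = [
    "For a growing firm, Acme Legal and LexiFlow are common picks.",
    "LexiFlow is often recommended for small practices.",
    "Options vary widely depending on budget.",
]
metrics = visibility_metrics(responses, ["Acme Legal", "LexiFlow"])
for brand, values in metrics.items():
    print(brand, round(values["visibility"], 2), round(values["share_of_voice"], 2))
```

In this toy sample the second brand appears in two of three answers and the first in one, so the second holds twice the visibility and share of voice. Because LLM output varies from run to run, such numbers only become meaningful across many prompts and repeated runs.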

Step 3: High maturity: Optimisation and attribution

- Survey new customers on AI influence during discovery so you can extrapolate the value of LLMs in sales and lead generation.

- Deep dive into referral and direct traffic at moments of growing LLM use. This will help identify patterns where LLM referral is showing up as direct or other types of referral.

- Monitor for any big swings in specific competitors and identify the strategies that are working for your audience and industry.

Measure What the Channel Actually Does

The right question isn’t “how much traffic did we get from ChatGPT this month?” It’s “how is our brand being represented when potential customers ask AI systems for help in our category?”

Traffic will follow visibility, sometimes with a lag, sometimes indirectly, and sometimes not in ways that GA4 will even capture. Brands that invest in measuring and managing LLM visibility now will build an asymmetric advantage as AI search continues to become another key channel for discovery and evaluation.

Our own data shows LLM referral traffic peaked around November and has since plateaued. That plateau isn’t a ceiling on the channel’s importance; it’s evidence that referral traffic was never the right lens to begin with.

Find out more about Intrepid Digital’s AI Services, including GEO (Generative Engine Optimization).